AI Development & Deployment Governance Framework

The compliance and governance framework for organizations building AI-powered products and systems. Navigate EU AI Act requirements, state regulations, bias testing, impact assessments, and audit trails—before they become legal liabilities.

Important distinction: This framework is for organizations building AI systems (AI-powered features, ML models, automated decision tools). If you're managing employees using AI tools like ChatGPT, you want the AI Tool Governance Pipeline instead.

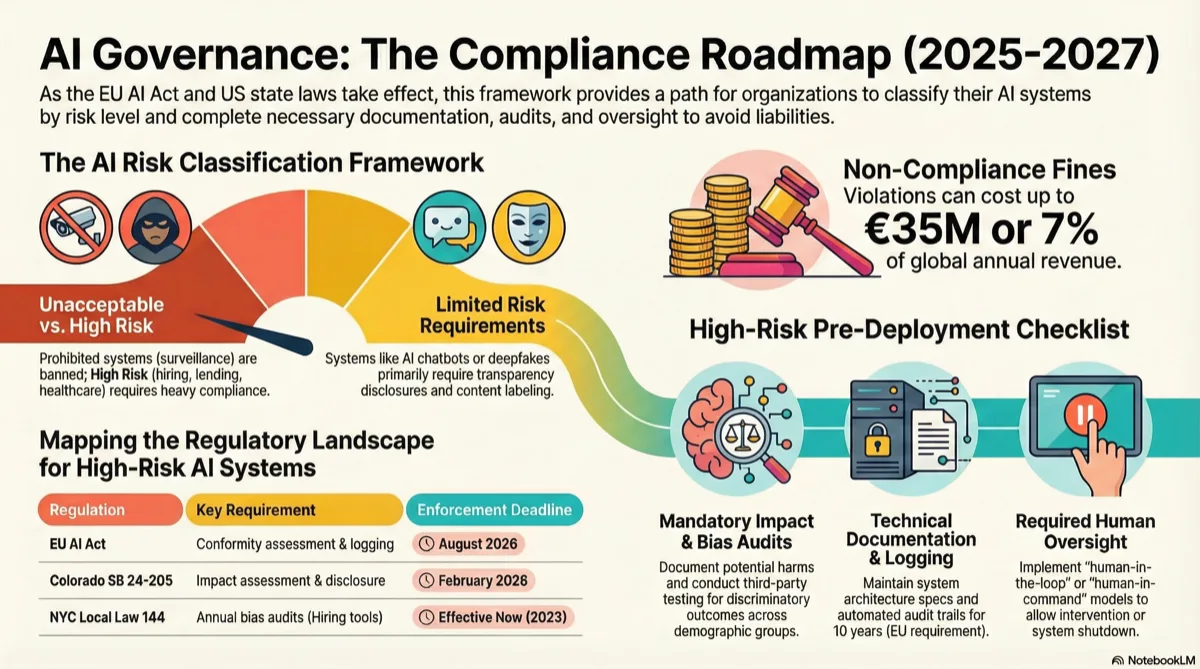

The Regulatory Landscape (2025-2027)

AI governance isn't optional anymore. Between the EU AI Act, state laws, and sector-specific regulations, ignorance is expensive. Here's what's actually enforceable and what you need to track.

EU AI Act

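Status: Entered into force August 1, 2024. Obligations phase in through August 2027: prohibitions apply since February 2025, most high-risk provisions from August 2026, and high-risk AI embedded in regulated products from August 2027.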

Who it affects: Any company offering AI systems in the EU market, regardless of where you're headquartered.

Key requirements:

- Risk classification (Unacceptable/High/Limited/Minimal)
- Conformity assessments for high-risk AI
- Technical documentation and audit trails
- Human oversight mechanisms
- Transparency and disclosure requirements

→ Fines up to €35M or 7% of global annual turnover, whichever is higher (the top tier, for prohibited practices)

US State Regulations

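Status: Colorado SB 24-205 takes effect June 30, 2026 (delayed from February 1, 2026). NYC Local Law 144 is already in force. California, New York State, and others have bills active or pending.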

Who it affects: Companies deploying "high-risk AI" in specific states.

Notable laws:

- Colorado SB 24-205: Impact assessments for high-risk AI
- NYC Local Law 144: Bias audits for hiring AI (effective 2023)
- California AB 2930 (proposed, not enacted): Automated decision tool transparency
- Multiple states drafting similar frameworks

→ Penalties vary by state; Colorado: up to $20K per violation

GDPR & Privacy Laws

Status: GDPR enforced since 2018. Article 22 applies to automated decisions.

Who it affects: Any AI system processing EU residents' personal data.

AI-specific requirements:

- Right to explanation for automated decisions
- Right to human review and contest
- Data Protection Impact Assessments (DPIA) for AI
- Lawful basis for processing personal data with AI

→ Fines up to €20M or 4% of global revenue

Sector-Specific Rules

Status: Various federal and state regulations apply based on industry.

Who it affects: Healthcare, finance, employment, housing, insurance sectors.

Key regulations:

- Healthcare: HIPAA + FDA AI/ML medical device framework
- Finance: ECOA (fair lending), FCRA (credit decisions)
- Employment: EEOC guidance on AI hiring tools
- Housing: Fair Housing Act applies to AI screening

→ Industry-specific penalties + reputational damage

The reality: If you're deploying AI that makes or influences decisions about people (hiring, lending, healthcare, pricing), you're almost certainly subject to multiple overlapping regulations. Compliance isn't about checking boxes—it's about documenting intent, testing for harm, and maintaining audit trails from day one.

AI System Classification Framework

First step: classify your AI system. The EU AI Act uses a 4-tier model. US state laws use similar logic. This determines what documentation, testing, and oversight you need.

Unacceptable Risk

Prohibited uses under EU AI Act

Examples:

- Social scoring
- Real-time remote biometric identification in public spaces by law enforcement (with narrow exceptions)
- Subliminal manipulation causing harm
- Exploiting vulnerabilities (age, disability)

Governance requirement:

Do not deploy. Period.

If your system does this, stop development immediately and consult legal.

High Risk

Significant potential for harm requiring strict governance

Examples:

- AI recruiting/hiring tools (NYC Law 144)
- Credit scoring and loan approval
- Medical diagnosis or treatment recommendations
- Law enforcement risk assessments
- Educational scoring (grades, admissions)
- Employment management (monitoring, promotion)
- Access to essential services (housing, insurance)

Governance requirements:

- Pre-deployment impact assessment
- Bias audit (annual or per use case)
- Technical documentation package
- Human oversight mechanism
- Ongoing monitoring and logging
- Conformity assessment (EU)
- Transparency disclosures

→ See detailed checklist below

Limited Risk

Transparency obligations but lighter compliance burden

Examples:

- AI chatbots and virtual assistants
- AI-generated content (text, images, video)
- Emotion recognition systems (note: the EU AI Act prohibits these in workplaces and educational institutions)
- Deepfakes and synthetic media
- Recommendation engines (content, products)

Governance requirements:

- Disclose AI interaction to users
- Label AI-generated content
- Watermark synthetic media (where feasible)
- Basic documentation of system purpose

→ Focus on transparency, not heavy testing

Minimal Risk

No specific AI regulations apply

Examples:

- Spam filters
- AI-powered video games
- Inventory management algorithms
- Weather prediction models
- Non-personalized recommendation engines

Governance requirements:

None mandated. Follow general software best practices.

You can voluntarily adopt codes of conduct or documentation standards, but it's not legally required.

Quick Classification Decision Tree

1. Does your AI manipulate, exploit, or surveil people in prohibited ways?

→ YES: Unacceptable Risk. Do not proceed.

→ NO: Continue to step 2

2. Does your AI make or significantly influence decisions about people in sensitive areas?

(Hiring, lending, healthcare, law enforcement, education, essential services)

→ YES: High Risk. Full compliance required.

→ NO: Continue to step 3

3. Does your AI interact with users, generate content, or create synthetic media?

→ YES: Limited Risk. Transparency required.

→ NO: Minimal Risk. No specific AI governance needed.
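
The three questions above can be sketched as a small function. This is an illustrative first-pass triage only: the function name, the enum, and the boolean parameters are hypothetical, and answering each question honestly still takes legal judgment.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "Unacceptable Risk"
    HIGH = "High Risk"
    LIMITED = "Limited Risk"
    MINIMAL = "Minimal Risk"


def classify_ai_system(
    prohibited_practice: bool,
    decides_about_people_in_sensitive_area: bool,
    interacts_or_generates_content: bool,
) -> RiskTier:
    """Ask the three questions in order; the first YES decides the tier."""
    if prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if decides_about_people_in_sensitive_area:
        return RiskTier.HIGH
    if interacts_or_generates_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# Example: a resume-screening tool that also chats with candidates.
# The hiring use case wins over the chatbot aspect because it is asked first.
tier = classify_ai_system(False, True, True)
print(tier.value)  # High Risk
```

The ordering matters: a system that matches several questions takes the strictest tier it matches.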

High-Risk AI: Pre-Deployment Compliance Checklist

If your system is High Risk, here's what you need before launch. This checklist combines EU AI Act, Colorado law, NYC Local Law 144, and GDPR requirements. One "No" means you're not ready.

1. Pre-Deployment Impact Assessment Completed

Document intended use, potential harms, mitigation strategies, and affected populations.

Minimum required content:

- Detailed system description (what it does, how it works)
- Use case and decision-making role (advisory vs. fully automated)
- Data sources and training data description
- Reasonably foreseeable risks of harm (bias, errors, misuse)
- Risk mitigation measures implemented
- Affected demographic groups and disparate impact analysis
- Human oversight mechanisms

Legal basis: Colorado requires this for high-risk AI once SB 24-205 takes effect in 2026. EU AI Act Article 27 requires a similar fundamental rights impact assessment from certain deployers.

2. Independent Bias Audit Conducted

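Audit testing for discriminatory outcomes across protected classes. NYC Local Law 144 requires an independent auditor; elsewhere an internal audit may be acceptable, but an independent one carries more weight.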

Required testing:

- Disparate impact analysis by race, gender, age (if data available)
- Selection rate ratios or impact ratios (NYC: must publish)
- Confusion matrix metrics (false positive/negative rates by group)
- Statistical significance testing
- Audit date, methodology, sample size documented

Frequency:

- NYC Local Law 144: Annual audit for hiring tools
- EU AI Act: Audit before deployment + when substantial modifications made
- Best practice: Annually or when performance degrades

NYC requires publishing summary statistics. EU requires audit trail documentation.
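
To make the selection-rate and impact-ratio items concrete, here is a minimal sketch using made-up data. The function names are hypothetical, and the 0.8 cutoff is an assumption borrowed from the EEOC "four-fifths" rule of thumb; the text above only says the ratios must be published, not that they must clear a fixed threshold.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs -> {group: selection rate}."""
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {g: r / best for g, r in rates.items()}


# Made-up data: group A has 40 of 100 selected, group B has 25 of 100.
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)
rates = selection_rates(records)
ratios = impact_ratios(rates)

# Assumed review threshold (four-fifths rule of thumb), not a legal bright line.
flagged = [g for g, ratio in ratios.items() if ratio < 0.8]
print(flagged)  # ['B']
```

A flagged ratio is a prompt for investigation and significance testing, not proof of discrimination; small samples can produce low ratios by chance.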

3. Technical Documentation Package Created

Comprehensive documentation of system design, training, testing, and performance.

EU AI Act Annex IV requirements:

- System architecture and design specs
- Training data description (sources, labeling, preprocessing)
- Training methodology and hyperparameters
- Model performance metrics (accuracy, precision, recall)
- Validation and testing results
- Known limitations and failure modes
- Cybersecurity measures
- Change log and version control

Retention:

EU: 10 years after system placed on market. US: Varies by sector (often 3-7 years).

4. Model Card Published (Internal or External)

Standardized documentation format for ML model details, intended use, and limitations.

Model card sections (based on Mitchell et al. framework):

- Model details (version, type, authors, license)
- Intended use (primary use cases, out-of-scope uses)
- Factors (relevant groups, environmental conditions)
- Metrics (performance measures used)
- Training data (size, composition, preprocessing)
- Evaluation data (test set description, demographics if relevant)
- Quantitative analyses (performance by subgroup)
- Ethical considerations (fairness, privacy, security)
- Caveats and recommendations (known limitations)

Template available at modelcards.withgoogle.com. Not legally required but considered best practice.

5. Human Oversight Mechanism Implemented

GDPR Article 22 and EU AI Act require human review capability for high-stakes decisions.

Acceptable oversight models:

- Human-in-the-loop: Human approves each decision before execution
- Human-on-the-loop: Human monitors system and can intervene
- Human-in-command: Human can override or shut down system

Documentation required:

- Which oversight model is used and why
- Who has oversight authority (roles, training requirements)
- Intervention triggers and escalation procedures
- Interface design for human reviewers
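
One way to picture how the three oversight models differ at runtime is a routing function like the sketch below. Everything here is hypothetical: the `Routing` type, the model labels, and especially the confidence-based intervention trigger, which is just one possible trigger among those you would document.

```python
from dataclasses import dataclass


@dataclass
class Routing:
    execute_now: bool   # may the system act without waiting for a person?
    needs_review: bool  # should a human reviewer look at this decision?
    reason: str


def route_decision(oversight_model: str, confidence: float,
                   review_threshold: float = 0.9) -> Routing:
    """Decide whether a model output runs immediately or waits for a human."""
    if oversight_model == "in-the-loop":
        return Routing(False, True, "every decision waits for human approval")
    if oversight_model == "on-the-loop":
        # Assumed trigger: low model confidence pauses the decision for review.
        if confidence < review_threshold:
            return Routing(False, True, "low confidence triggered intervention")
        return Routing(True, False, "executed under live human monitoring")
    if oversight_model == "in-command":
        return Routing(True, False, "executed; humans retain override and shutdown")
    raise ValueError(f"unknown oversight model: {oversight_model!r}")


print(route_decision("on-the-loop", confidence=0.72).reason)
# low confidence triggered intervention
```

Whatever triggers you choose, the point is that they are written down and testable rather than left to reviewer discretion.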

6. Automated Logging and Audit Trails Active

EU AI Act Article 12: High-risk systems must enable traceability through logging.

What to log:

- Input data for each decision (or representative sample)
- Model output and confidence scores
- Timestamp and system version used
- User or system that invoked the model
- Human override events (if applicable)
- Errors, exceptions, and edge cases

Retention and access:

- Logs retained per regulation (typically 3-10 years)
- Accessible to auditors and regulators on request
- Protected from tampering (append-only, cryptographic signing)
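
As a rough illustration of tamper-evident logging, the sketch below chains each log entry to the previous one with a SHA-256 hash. It is an assumption-laden stand-in: the class and field names are hypothetical, the log lives in memory, and a hash chain is simpler than true cryptographic signing, which would also need a private key held outside the logging system.

```python
import hashlib
import json
from datetime import datetime, timezone


class DecisionLog:
    """In-memory append-only log where each entry commits to the previous one."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(body):
        canonical = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(canonical).hexdigest()

    def append(self, model_version, invoked_by, inputs, output, confidence,
               human_override=False):
        prev_hash = self._entries[-1]["entry_hash"] if self._entries else "0" * 64
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "invoked_by": invoked_by,
            "inputs": inputs,
            "output": output,
            "confidence": confidence,
            "human_override": human_override,
            "prev_hash": prev_hash,
        }
        entry = dict(body, entry_hash=self._digest(body))
        self._entries.append(entry)
        return entry

    def verify(self):
        """True if every entry matches its hash and links to its predecessor."""
        prev_hash = "0" * 64
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body["prev_hash"] != prev_hash:
                return False
            if self._digest(body) != entry["entry_hash"]:
                return False
            prev_hash = entry["entry_hash"]
        return True


log = DecisionLog()
log.append("v1.2.0", "screening-api", {"candidate_id": "c-001"}, "advance", 0.91)
log.append("v1.2.0", "screening-api", {"candidate_id": "c-002"}, "reject", 0.64,
           human_override=True)
print(log.verify())  # True

log._entries[0]["output"] = "reject"  # simulate tampering with a stored entry
print(log.verify())  # False
```

Note the limitation: deleting entries from the end of the chain goes undetected unless the latest hash is also anchored somewhere the log writer cannot change.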

7. User-Facing Transparency Disclosures

Inform affected individuals that AI is used and provide meaningful information about it.

Required disclosures:

- Clear notice that AI is making or assisting with decisions
- Purpose and general logic of the AI system
- Right to contest or request human review (where applicable)
- Contact for questions or complaints

Example (NYC hiring tool):

"This employer uses an automated employment decision tool (AEDT) to assist in hiring decisions. The tool analyzes resume keywords and scores candidates based on relevance to the job description. An independent bias audit was conducted on [date]. You have the right to request an alternative selection process or accommodation. For questions, contact [email]."

8. Post-Deployment Monitoring Plan Established

EU AI Act Article 72 (Article 61 in the original draft): Providers must run a post-market monitoring system for high-risk AI in operation.

Monitoring plan must include:

- Performance metrics tracked (accuracy, error rates)
- Drift detection (data drift, concept drift monitoring)
- Bias metrics tracked over time (same as initial audit)
- Incident reporting procedures (what triggers investigation)
- Review cadence (monthly, quarterly, or trigger-based)
- Responsible team and escalation path

Trigger for re-audit:

- Statistically significant performance degradation
- User complaints exceed threshold
- Substantial modification to model or data
- Annual review (per NYC Law 144 or internal policy)
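
For the drift-detection item, one commonly used metric is the Population Stability Index (PSI), which compares the distribution of a feature or score in production against a baseline. The sketch below is a plain-Python version under stated assumptions: PSI is my choice of metric rather than something the regulations name, and the 0.25 alert level is a widely quoted rule of thumb, not a standard.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a production sample of one variable."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # eps keeps empty buckets from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # scores seen at audit time
same = list(baseline)                         # production looks identical
shifted = [x + 0.3 for x in baseline]         # production scores moved upward

print(population_stability_index(baseline, same))           # 0.0
print(population_stability_index(baseline, shifted) > 0.25)  # True
```

A PSI above your chosen alert level would be one candidate "trigger for re-audit" alongside the triggers listed above.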

Deployment readiness: All 8 checkboxes must be complete before launching a high-risk AI system in regulated markets. If you're missing any, you're not compliant. The cost of getting this wrong isn't just fines—it's reputational damage, lawsuits, and rebuilding trust.

AI System Inventory & Registry Template

You can't govern what you can't see. Maintain a living inventory of all AI systems in development or production. Colorado law and EU AI Act both implicitly require this.

Inventory Fields (Track for Each AI System)

| Field | Description | Why It Matters |
|---|---|---|
| System Name | Internal identifier | Unique reference for documentation |
| Owner/Product Team | Team responsible for system | Accountability and contact point |
| Risk Classification | Unacceptable / High / Limited / Minimal | Determines compliance requirements |
| Use Case | What the system does | Justifies risk classification |
| Deployment Status | Research / Dev / Staging / Production | Compliance kicks in at production |
| Deployment Date | When system went live | Audit schedule and retention dates |
| Affected Geographies | EU / US (which states) / Other | Which regulations apply |
| Impact Assessment | Link to document or "N/A" | Required for High Risk systems |
| Last Bias Audit | Date of most recent audit | Track compliance with annual requirements |
| Technical Docs | Link to documentation package | EU AI Act Annex IV requirement |
| Human Oversight Model | In-loop / On-loop / In-command / None | GDPR Article 22 compliance |
| Logging Enabled | Yes / No | EU AI Act Article 12 requirement |
| Next Review Date | Scheduled re-audit or monitoring review | Ongoing compliance tracking |

Governance tip: Store this inventory in a shared system (Airtable, Notion, internal wiki) accessible to Legal, Compliance, Product, and Engineering. Review quarterly. Add new systems as they move from dev to staging. This becomes your compliance dashboard.
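
If you keep the inventory in code or export it from a shared tool, the fields above map naturally onto a record type, and a small check can surface gaps for High Risk systems. This is a sketch with hypothetical names; the one-year audit interval is an assumption based on the annual audit cadence mentioned earlier.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AISystemRecord:
    system_name: str
    owner_team: str
    risk_classification: str   # "Unacceptable" | "High" | "Limited" | "Minimal"
    use_case: str
    deployment_status: str     # "Research" | "Dev" | "Staging" | "Production"
    affected_geographies: list = field(default_factory=list)
    deployment_date: Optional[date] = None
    impact_assessment: Optional[str] = None   # link, or None for "N/A"
    last_bias_audit: Optional[date] = None
    technical_docs: Optional[str] = None      # link to documentation package
    human_oversight_model: str = "None"
    logging_enabled: bool = False
    next_review_date: Optional[date] = None


def compliance_gaps(record, today, audit_interval_days=365):
    """List missing items for a High-risk system; an empty list means none found."""
    if record.risk_classification != "High":
        return []
    gaps = []
    if not record.impact_assessment:
        gaps.append("impact assessment missing")
    if record.last_bias_audit is None:
        gaps.append("no bias audit on record")
    elif (today - record.last_bias_audit).days > audit_interval_days:
        gaps.append("bias audit overdue")
    if not record.technical_docs:
        gaps.append("technical documentation missing")
    if record.human_oversight_model == "None":
        gaps.append("no human oversight model")
    if not record.logging_enabled:
        gaps.append("logging disabled")
    return gaps


screener = AISystemRecord(
    system_name="resume-screener",
    owner_team="Talent Platform",
    risk_classification="High",
    use_case="Ranks applicants for recruiter review",
    deployment_status="Production",
    affected_geographies=["US-NYC"],
    impact_assessment="https://example.com/ia/resume-screener",  # placeholder link
    last_bias_audit=date(2024, 1, 15),
    technical_docs="https://example.com/docs/resume-screener",   # placeholder link
    human_oversight_model="In-loop",
)
print(compliance_gaps(screener, today=date(2025, 6, 1)))
# ['bias audit overdue', 'logging disabled']
```

Running a check like this on a schedule turns the quarterly review into a list of concrete follow-ups.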

AI Incident Response Procedures

When your AI system fails, discriminates, or causes harm, response speed matters. EU AI Act Article 73 (Article 62 in the original draft) requires providers to report serious incidents to regulators. Here's your playbook.

What Qualifies as an AI Incident?

Technical Failures:

- Model performance drops below acceptable threshold
- Data drift causing erroneous predictions
- System produces unexplained outputs
- Security breach exposing model or data

Harm-Based Incidents:

- Discriminatory outcomes detected post-deployment
- User harm (financial, reputational, physical)
- Regulatory violation discovered
- Media or public attention to system failures

5-Step Incident Response Protocol

1. Detect & Triage (Hour 0-2)

Actions:

- Incident logged in tracking system with timestamp
- Severity assessed (Critical / High / Medium / Low)
- AI system owner notified immediately
- Preliminary impact assessment (how many users affected?)

Critical severity trigger: Immediate halt/rollback if ongoing user harm.

2. Contain & Mitigate (Hour 2-24)

Actions:

- Decision on system status (continue / modify / suspend / shut down)
- If suspended: notify affected users and stakeholders
- Preserve logs and evidence (do not delete or modify)
- Activate human oversight or fallback procedures
- Legal and Compliance teams briefed

3. Investigate Root Cause (Day 1-7)

Actions:

- Review logs and system behavior leading to incident
- Reproduce issue in test environment if possible
- Identify root cause (model, data, code, human process)
- Document findings in incident report
- Assess whether similar issues exist in other AI systems

4. Remediate & Test (Day 7-30)

Actions:

- Implement fix (retrain model, update data, change logic)
- Re-run bias audit and impact assessment if High Risk system
- Test fix in staging environment
- Validate that fix doesn't introduce new issues
- Update technical documentation with changes

5. Redeploy & Monitor (Day 30+)

Actions:

- Gradual rollout with enhanced monitoring
- Communicate fix to affected users (if applicable)
- Confirm any required regulator report was filed on time (EU: serious incidents within 15 days of becoming aware, so the report itself cannot wait for redeployment)
- Post-incident review with cross-functional team
- Update incident response procedures based on lessons learned

EU AI Act reporting requirement: Article 73 (Article 62 in the original draft) requires providers of high-risk AI systems to report serious incidents to national authorities no later than 15 days after becoming aware of them, with shorter deadlines for the most severe cases. "Serious" includes incidents leading to death, serious harm to health, or serious violations of fundamental rights. Establish your reporting chain now. Don't wait for an incident to figure out who calls the regulator.
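
To turn the protocol's time windows into concrete due dates, a helper along these lines can be wired into your incident tracker. It is a minimal sketch: the names are hypothetical, the phase windows are taken from the step headings above, and the 15-day regulator window is counted from detection for simplicity, although the legal clock starts when you become aware of a serious incident.

```python
from datetime import datetime, timedelta

# End of each phase, measured from the moment the incident was detected.
PHASE_WINDOWS = [
    ("Detect & Triage", timedelta(hours=2)),
    ("Contain & Mitigate", timedelta(hours=24)),
    ("Investigate Root Cause", timedelta(days=7)),
    ("Remediate & Test", timedelta(days=30)),
]
REGULATOR_REPORT_WINDOW = timedelta(days=15)


def response_schedule(detected_at, serious=False):
    """Map each phase (and the regulator report, if serious) to its due time."""
    schedule = {name: detected_at + window for name, window in PHASE_WINDOWS}
    if serious:
        schedule["Report to regulator"] = detected_at + REGULATOR_REPORT_WINDOW
    return schedule


detected = datetime(2026, 3, 1, 9, 0)
for step, due in response_schedule(detected, serious=True).items():
    print(f"{step}: due by {due:%Y-%m-%d %H:%M}")
```

Note that the regulator deadline falls in the middle of the remediation phase, which is why the reporting chain has to be settled before an incident happens.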

Regulatory Compliance Quick Reference

Different regulations, different requirements. Use this table to map your AI system to applicable laws.

| Regulation | Applies To | Key Requirement | Deadline |
|---|---|---|---|
| EU AI Act | High-risk AI in EU market | Conformity assessment, technical docs, logging | Aug 2026 (most high-risk); Aug 2027 (high-risk AI in regulated products) |
| Colorado SB 24-205 | High-risk AI deployed in Colorado | Impact assessment, disclosure, risk management | June 30, 2026 (delayed from Feb 2026) |
| NYC Local Law 144 | Automated employment decision tools (NYC) | Annual bias audit, publish summary stats | Effective July 2023 |
| GDPR Article 22 | Automated decisions affecting EU residents | Right to human review, right to explanation | Active since 2018 |
| ECOA (Fair Lending) | AI used in credit decisions (US) | Adverse action notices, non-discrimination | Active |
| FCRA | AI credit reporting or screening (US) | Accuracy, dispute process, disclosure | Active |
| FDA AI/ML Guidance | AI medical devices (US) | Premarket review, algorithm change protocol | Active (evolving) |

Not sure which applies to you? Start with geography (where are your users?) and use case (what decisions does your AI make?). If you're making decisions about people in sensitive areas—hiring, lending, healthcare—assume multiple regulations apply and consult legal counsel.
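
The geography-plus-use-case lookup can be sketched as a function over the table above. Treat it as a conversation starter for counsel, not a legal determination: the geography tags (`"EU"`, `"US-CO"`, `"US-NYC"`), the use-case labels, and the function itself are all hypothetical simplifications.

```python
def applicable_regulations(geographies, use_case, high_risk,
                           automated_decisions=True):
    """Return the regulations from the quick-reference table that may apply.

    geographies: set of tags such as {"EU", "US", "US-CO", "US-NYC"}
    use_case: "hiring", "credit", "medical_device", or "other"
    """
    regs = []
    if "EU" in geographies:
        if high_risk:
            regs.append("EU AI Act")
        if automated_decisions:
            regs.append("GDPR Article 22")
    if "US-CO" in geographies and high_risk:
        regs.append("Colorado SB 24-205")
    if "US-NYC" in geographies and use_case == "hiring":
        regs.append("NYC Local Law 144")
    in_us = any(g == "US" or g.startswith("US-") for g in geographies)
    if in_us and use_case == "credit":
        regs.extend(["ECOA (Fair Lending)", "FCRA"])
    if in_us and use_case == "medical_device":
        regs.append("FDA AI/ML Guidance")
    return regs


print(applicable_regulations({"EU", "US-NYC"}, "hiring", high_risk=True))
# ['EU AI Act', 'GDPR Article 22', 'NYC Local Law 144']
```

Even this toy version shows how quickly obligations stack up once a system crosses more than one jurisdiction.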

Building AI Systems? Don't Navigate Compliance Alone.

The regulatory landscape is complex and penalties are severe. Whether you need help classifying your AI systems, running bias audits, building impact assessments, or preparing for EU AI Act compliance, I can help you get it right before launch.
